In my work across capability frameworks, learning systems, and workforce analytics, skills are everywhere — and almost always doing too much work. Skills-based hiring, skills inference, skills clouds, skills graphs. The language is familiar, but the way skills are used is often incoherent.

What I consistently see is this: skills are treated as a universal solution when they’re actually a very specific tool. Even more confusion surrounds skills taxonomies, which are regularly mistaken for frameworks, development models, or proxies for performance.

This article clarifies what skills and skills taxonomies actually are, why they’re so frequently conflated with other constructs, and the jobs they genuinely support — and those they don’t.

How Skills Are Commonly Defined

When we look to authoritative sources, definitions of skills are surprisingly aligned.

The SFIA Foundation defines a skill as the ability to apply knowledge and develop proficiency in performing tasks, often demonstrated in controlled or learning environments. The OECD treats skills as discrete, observable units that can be described and compared across roles and labour markets. Similarly, DigComp frames skills as the capacity to apply knowledge to achieve results.

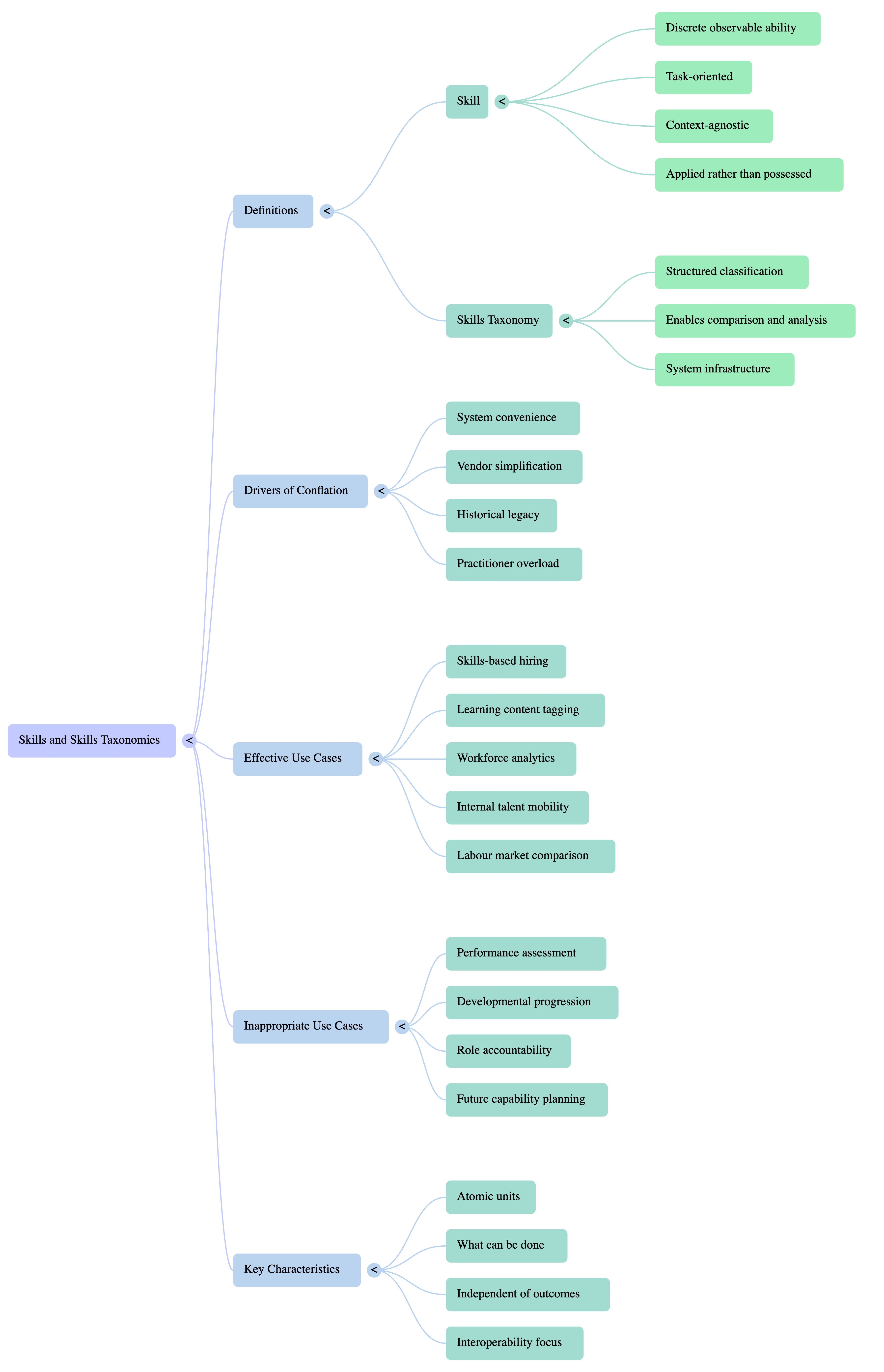

Across these definitions, a consistent pattern appears. Skills are:

- applied rather than possessed,

- task-oriented,

- observable in execution.

From what I see, skills are deliberately atomic. They describe what someone can do, not how well they do it, why they do it, or how it contributes to outcomes in a specific organisational context.

What the Dictionary Gets Right — and What It Misses

Most dictionary definitions describe a skill as the ability to do something well. That’s broadly accurate, but insufficient for workforce design.

It gets two things right. Skills are abilities, and they relate to action.

What it misses, and where HR systems struggle, is that skills are context-agnostic. The word “well” implies a standard, but the definition never says whose standard or in what setting. On its own, a skill says nothing about standards, accountability, outcomes, or integration with other skills. A person can have a skill without being effective, reliable, or ready.

In practice, I see organisations assume that listing skills is the same as defining capability. It isn’t.

Why Skills Get So Heavily Conflated in HR

Skills don’t fail because they’re weak constructs. They fail because they’re overloaded.

Several structural forces drive this:

- System convenience: Skills are easy to store, tag, infer, and analyse. That makes them attractive as a default data unit, even when they’re the wrong abstraction.

- Vendor simplification: “Skills-based” is easier to sell than “multi-layered workforce architecture”.

- Historical overlap: In vocational and compliance settings, skills, competencies, and standards were tightly coupled — a legacy many organisations still carry.

- Practitioner overload: Skills get stretched to describe development, performance, potential, and readiness — jobs they were never designed to do.

This isn’t sloppy practice. It’s what happens when a precise tool is used as a catch-all.

The Problem Skills Were Originally Designed to Solve

Skills exist to solve the problem of comparability.

In my work, skills are most powerful when they’re used to:

- compare roles across organisations,

- match people to tasks,

- align learning content to requirements,

- enable labour market analysis at scale.

Skills answer the question:

What activities does this role or person need to be able to perform?

They were never designed to answer:

- how well those activities are performed,

- whether they meet organisational standards,

- how they combine to deliver outcomes,

- how they evolve over time.

When skills are pushed into those roles, confusion follows.
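
To make the comparability point concrete, here is a minimal sketch of matching a person to a role by comparing sets of skill labels. The function name, the labels, and the two measures (coverage and Jaccard similarity) are illustrative assumptions, not part of any particular standard or product.

```python
def compare_skills(required: set[str], held: set[str]) -> dict:
    """Compare two sets of skill labels.

    Only presence or absence is compared; proficiency and context are
    deliberately not modelled.
    """
    matched = required & held
    union = required | held
    return {
        "matched": sorted(matched),
        "missing": sorted(required - held),
        # Share of the required skills that are held.
        "coverage": len(matched) / len(required) if required else 1.0,
        # Jaccard similarity: overlap relative to all skills mentioned.
        "jaccard": len(matched) / len(union) if union else 1.0,
    }


# Hypothetical role requirements and person profile.
role = {"sql querying", "data visualisation", "stakeholder interviewing"}
person = {"sql querying", "data visualisation", "python scripting"}

result = compare_skills(role, person)
print(result)
```

The result shows which activities are covered and which are missing. It says nothing about how well any of them are performed, which is exactly the boundary described above.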

How Skills and Skills Taxonomies Are Used in HR Today

Across the organisations I work with, skills appear everywhere:

- Recruitment and screening

- Learning content tagging

- Internal talent marketplaces

- Workforce analytics and AI inference

Skills taxonomies — such as those from the OECD or providers like Lightcast — provide the structure that makes this possible. They classify skills into categories, hierarchies, and relationships so systems can operate at scale.

This works well when the goal is interoperability, data consistency, and insight.

It breaks down when taxonomies are treated as development frameworks, when proficiency is inferred without context, or when skills lists are mistaken for role accountability.
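
One way to picture the data-consistency job is label normalisation: different systems describe the same skill in different words, and the taxonomy supplies a single identifier for all of them. The sketch below is a simplified assumption of how that might look. The identifiers, aliases, and `normalise` helper are hypothetical and not taken from any real taxonomy.

```python
from typing import Optional

# Hypothetical alias table: free-text label -> canonical skill id.
ALIASES = {
    "sql": "sk-001",
    "sql querying": "sk-001",
    "writing sql queries": "sk-001",
    "data viz": "sk-002",
    "data visualisation": "sk-002",
    "data visualization": "sk-002",
}

# Hypothetical canonical labels for each skill id.
CANONICAL_LABELS = {
    "sk-001": "SQL querying",
    "sk-002": "Data visualisation",
}


def normalise(label: str) -> Optional[str]:
    """Return the canonical skill id for a free-text label, or None if unknown."""
    key = " ".join(label.lower().split())  # lower-case and collapse whitespace
    return ALIASES.get(key)


job_ad = normalise("SQL")
cv = normalise("  Writing SQL  queries ")
unknown = normalise("Leadership presence")

print(job_ad, cv, unknown)
```

Here the job ad and the CV resolve to the same identifier, so downstream systems can compare them. The unmatched label returns `None` rather than a guess, which keeps inference from inventing structure the taxonomy does not contain.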

A Clear, Practical Definition of Skills and Skills Taxonomies (My Position)

Based on both standards and applied work, this is the definition I use:

A skill is a discrete, observable ability to perform a task or activity, independent of organisational context or performance standard.

A skills taxonomy is a structured classification of skills and knowledge domains that enables consistent description, comparison, and analysis across roles, systems, and labour markets.

Skills describe what can be done.

Skills taxonomies organise that information so humans and machines can work with it.

They are infrastructure — not strategy.
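
As a rough illustration of these two definitions as data, the sketch below models a taxonomy entry and a simple hierarchy. The class names, fields, and identifiers are assumptions made for the example. Note what the entry deliberately leaves out: there is no proficiency level, performance standard, or organisational context.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Entry:
    """One taxonomy entry: a skill or a broader knowledge domain."""
    entry_id: str
    label: str
    kind: str  # "skill" or "domain"


@dataclass
class SkillsTaxonomy:
    entries: dict[str, Entry] = field(default_factory=dict)
    broader: dict[str, str] = field(default_factory=dict)  # id -> broader id

    def add(self, entry: Entry, broader_id: Optional[str] = None) -> None:
        """Register an entry, optionally under an existing broader entry."""
        if entry.entry_id in self.entries:
            raise ValueError(f"duplicate id: {entry.entry_id}")
        if broader_id is not None and broader_id not in self.entries:
            raise KeyError(f"unknown broader id: {broader_id}")
        self.entries[entry.entry_id] = entry
        if broader_id is not None:
            self.broader[entry.entry_id] = broader_id

    def path(self, entry_id: str) -> list[str]:
        """Return labels from the broadest domain down to the given entry."""
        chain = [entry_id]
        while chain[-1] in self.broader:
            chain.append(self.broader[chain[-1]])
        return [self.entries[i].label for i in reversed(chain)]


# Hypothetical taxonomy fragment.
tax = SkillsTaxonomy()
tax.add(Entry("d-data", "Data analysis", "domain"))
tax.add(Entry("s-viz", "Data visualisation", "skill"), broader_id="d-data")

print(tax.path("s-viz"))
```

Because a broader entry must exist before anything is placed under it, and duplicate identifiers are rejected, the hierarchy cannot contain cycles. Anything about standards or readiness would have to live in a separate layer that references these identifiers.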

What This Definition Supports — and What It Doesn’t

Used correctly, skills and skills taxonomies support:

- skills-based hiring and screening,

- learning content discovery and tagging,

- workforce analytics and AI inference,

- internal mobility and adjacency insights.

They are poorly suited for:

- performance assessment,

- developmental progression,

- role standards and accountability,

- future capability planning.

When organisations try to make skills do these jobs, they end up recreating competencies or capabilities — usually badly.

Why Getting This Right Actually Matters

Skills form the data layer of modern workforce systems. When treated as such, they enable scale, interoperability, and insight. When treated as substitutes for judgement, context, or strategy, they create false confidence.

From what I see, precision here isn’t pedantry — it’s infrastructure.

Get skills right, and everything above them works better.

Get them wrong, and no amount of frameworks will compensate.